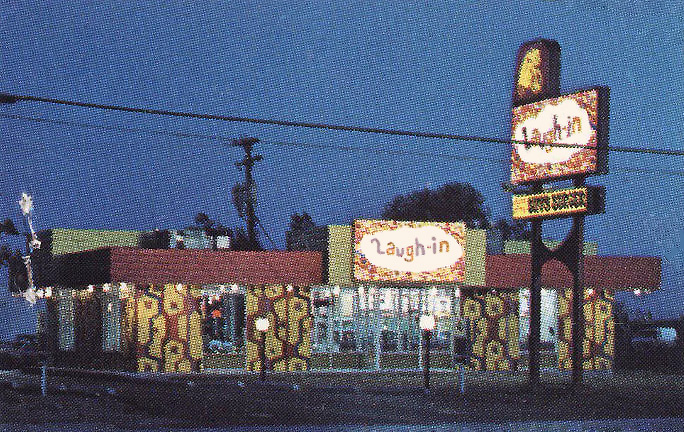

The restaurants called “Laugh-In,” based on the hit Dan Rowan and Dick Martin TV show, formed a teensy blip in an enterprise that culminated with big-time gambling casinos. [1969 menu cover]

Perhaps because the TV show was such an instant hit, it inspired the idea that the same enthusiasm would transfer over to the restaurant. It didn’t.

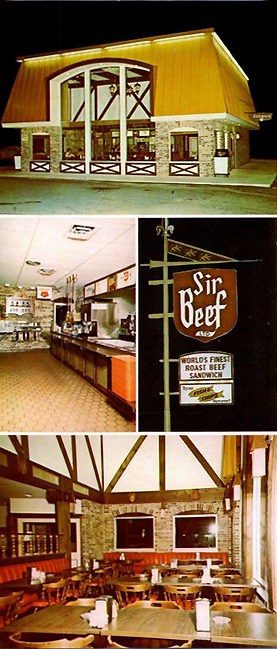

The chain was created in 1969, under the Lum’s restaurants umbrella, by brothers Clifford and Stuart Perlman who had built the successful Lum’s chain from a small Florida hot dog stand thirteen years earlier. The brothers adopted the Laugh-In concept and franchise system not long after they had begun another chain called Abners Beef House in 1968.

At that time the parent company, Lum’s, Inc., had 300 locations. The brothers decided to list it on the New York Stock Exchange. In addition to the three restaurant chains, they also owned a chain of Army-Navy stores, meat packing plants, honeymoon resorts in the Poconos, and a large country club in Miami, the city where the corporation was located.

Selling stock in Lum’s, Inc. was a way to amass money to fulfill the brothers’ ambition of buying Caesar’s Palace, the Las Vegas hotel and casino that had opened in 1966.

When they created Laugh-In, financial analysts warned investors that counting on the continuing popularity of a TV show was risky. What if it went off the air? Perhaps that did worry buyers. Forty franchises were expected to be sold in 1969, but the actual total for that year was probably lower and the overall total number of units ever opened is unknown. [above left, Rowan, right, Martin]

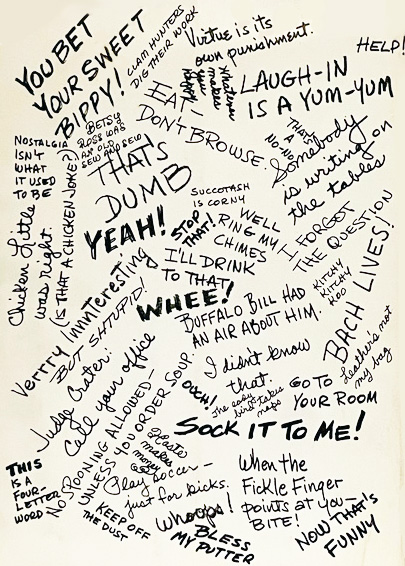

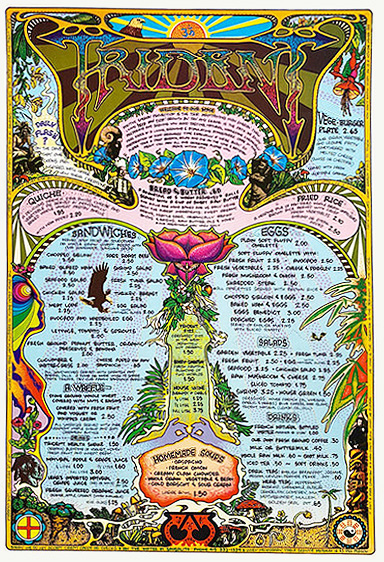

Laugh-In relied heavily on the goofiness of its namesake TV show for the design of its units, fronting its flat-roofed concrete-block-style buildings with wild patterns and colors. Table tops were manufactured with imitation graffiti reflecting phrases from the show. [Below, table-top graffiti as shown on the back of menu above]

Everything was meant to appeal to youthful customers. According to an early advertisement for franchisees, Laugh-In was “a fun restaurant, designed for today’s vast young-minded, leisure-rich market.”

Additionally, it advertised that it used a “proven food format” as employed by Lum’s. Lum’s had a signature dish, hot dogs cooked in beer, and it also sold beer. Laugh-In did not. But judging from their menus, neither Abners nor Laugh-In offered anything special in the way of food. Despite the “funny” names, Laugh-In selections were the same as those found in many other casual restaurants. Then there’s the fundamental question of whether customers choose what to order according to how funny the name is.

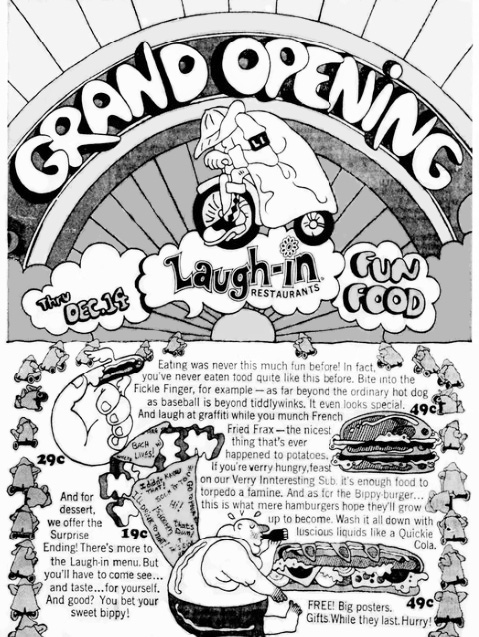

Judging from a 1969 advertisement for Abner’s franchisees, the Lum’s corporation was not especially good at presenting desirable-sounding food. The ad exclaimed over its menu’s “hunks of steak in a long fun bun” and “good things to drink, too, a malt, milk, a soda, coffee and tea.” As for Laugh-In, despite the funny names (Bippy Burgers, Fickle Fingers, Here Comes The Judge), its menu boiled down to the usual assortment of sandwiches, deep fried fish, onion rings, and a few oddities such as “tomato and egg slices” and “cheese on a bed of lettuce.”

The first Laugh-In restaurant opened in Hollywood, FL, in December 1969. A few months later 25 more franchises were said to have been sold around the country. But #1 did not do at all well. It closed just short of a year later, replaced with an “Adult Art Theatre.” [above, partial advertisement for the grand opening]

Overall, the brothers fared better with another big venture, Caesar’s Palace, acquired a couple of months before the first Laugh-In opened. Caesar’s Palace had a rough time at the beginning of their ownership, and the stock of Lum’s, Inc., its corporate owner, fell sharply. The brothers raised $4 million by selling off most of their restaurants, including Laugh-Ins, in 1971. But they ran into trouble attempting to open another casino in Atlantic City. New Jersey’s Casino Control Commission insisted that because the Perlmans had had financial dealings with reputed organized crime figures, they had to resign if a permanent permit was to be issued. Stockholders voted to buy them out, paying almost $100 million for their stock.

A few Laugh-In restaurants probably continued on for a while, though it had to be a blow when the show went off the air in 1973. The longest survivor may have been Jeff’s Laugh-In in Chicago, lasting until 1988.

© Jan Whitaker, 2023

It's great to hear from readers and I take time to answer queries. I can't always find what you are looking for, but I do appreciate getting thank yous no matter what the outcome.
